Recap so far

In a previous post, I discussed why we need secrets management for our applications and some of the possible solutions available to us.

Now that we know the "theory", it's time to put that knowledge into practice.

In this follow-up post, I'll show how you can easily implement secrets management for a containerized application running on Amazon Elastic Container Service (ECS). Let's get started.

Amazon Elastic Container Service (ECS) and secrets management

Amazon ECS lets you inject sensitive data into your containers by storing it in either AWS Secrets Manager secrets or AWS Systems Manager Parameter Store parameters and then referencing it in your container definition. This feature is supported by tasks using both the EC2 and Fargate launch types.

Hmmm... but what about EKS?

Kubernetes does have its native Secret objects, which are used for storing and managing sensitive information. Storing sensitive data in a Secret object is much safer than putting it verbatim in a Pod definition or baking it into a container image.

However, there is currently no direct integration between Amazon Elastic Kubernetes Service (EKS) and Parameter Store or Secrets Manager. If your containers are leveraging Kubernetes on AWS, you'll need alternative methods for secrets handling.

Fortunately, others have developed solutions to extend Kubernetes Secrets to include the concept of ExternalSecrets. For example, there is GoDaddy's open source project, which injects sensitive data managed by an external system, such as Parameter Store or Secrets Manager, into Kubernetes secrets.

Three types of sensitive data injection for ECS

With ECS, secrets can be exposed to a container in the following three ways.

1. Container secrets as environment variables

This is the method you will most likely use. Using this type of injection, sensitive information will be exposed as environment variables that are isolated to the target container.

To inject the secrets, you specify parameters in the task definition file as name/value pairs. The name portion specifies the environment variable name and the value portion references the Amazon Resource Name (ARN) of the secret (either a Secrets Manager ARN or a Parameter Store ARN).

The secret must be in the same account as the running container (but it can be in a different region). With Parameter Store secrets, you don't have to use the full ARN if the parameter is hosted in the same region: you can simply use the parameter name. However, consider always using the full ARN so that all your task definition files are consistent, regardless of where the secrets are stored. This reduces copy/paste errors as you add or update secrets for your containers.
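
To make the "always use the full ARN" advice concrete, here is a minimal Python sketch that builds a Parameter Store ARN from a parameter name. The helper name `parameter_arn`, the region, and the account ID are illustrative assumptions and not part of any AWS SDK.

```python
def parameter_arn(region: str, account_id: str, name: str) -> str:
    """Build the full ARN for a Parameter Store parameter.

    Hypothetical helper for illustration. Hierarchical parameter names
    start with "/", while the ARN has a single slash after "parameter",
    so leading slashes are stripped before joining.
    """
    return f"arn:aws:ssm:{region}:{account_id}:parameter/{name.lstrip('/')}"


if __name__ == "__main__":
    # Placeholder region, account ID, and parameter name.
    print(parameter_arn("us-west-2", "123456789012", "/prod/db_password"))
```

Generating the ARN in one place, rather than typing it into each task definition by hand, is one way to get the consistency described above.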

Task definition example for container secrets as environment variables

After this container is started, there will be two environment variables named MY_SECRET and ANOTHER_SECRET, which contain the specified values from Secrets Manager and Parameter Store.

    {
      "containerDefinitions": [{
        "secrets": [{
          "name": "MY_SECRET",
          "valueFrom": "arn:aws:secretsmanager:region:aws_account_id:secret:secret_name-AbCdEf"
        },{
          "name": "ANOTHER_SECRET",
          "valueFrom": "arn:aws:ssm:region:aws_account_id:parameter/parameter_name"
        }]
      }]
    }
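
On the application side, the injected values are ordinary environment variables. The following Python sketch shows one way an application might read them at startup and fail fast if any are missing. The function name `load_secrets` is an assumption for illustration; the variable names match the task definition example above.

```python
import os


def load_secrets(names, environ=None):
    """Return {name: value} for each required variable.

    Raises RuntimeError listing every variable that is missing or empty,
    so a misconfigured task definition is noticed at startup.
    """
    environ = os.environ if environ is None else environ
    missing = [name for name in names if not environ.get(name)]
    if missing:
        raise RuntimeError(f"Missing injected secrets: {', '.join(missing)}")
    return {name: environ[name] for name in names}


# Usage in the application's startup code:
#   secrets = load_secrets(["MY_SECRET", "ANOTHER_SECRET"])
```

Passing `environ` explicitly is only there to make the function easy to test; in a container it defaults to the process environment.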

2. Sensitive data for log configuration

Use this method when you need to specify secret information as part of log configuration, such as when using a third-party logging service like Splunk.

The secret information for the log configuration gets specified using the secretOptions parameter. The sensitive data then gets passed to the logging driver as part of the options data.

Task definition example for log configuration

    {
      "containerDefinitions": [{
        "logConfiguration": {
          "logDriver": "splunk",
          "options": {
            "splunk-url": "https://cloud.splunk.com:8080"
          },
          "secretOptions": [{
            "name": "splunk-token",
            "valueFrom": "arn:aws:secretsmanager:region:aws_account_id:secret:secret_name-AbCdEf"
          }]
        }
      }]
    }

3. Private registry credentials

Use this type of sensitive data injection when you need to access private repositories that require credentials, such as Docker Hub and JFrog Artifactory.

Note that this does not apply when accessing private repositories hosted in Elastic Container Registry (ECR). ECR relies on IAM roles for secure access to private repositories.

To use this feature, you first create a secret in Secrets Manager that contains your private registry credentials in the following format:

    {
      "username" : "privateRegistryUsername",
      "password" : "privateRegistryPassword"
    }

The private registry credentials then get specified using the Secrets Manager ARN as the credentialsParameter in the repositoryCredentials section of the task definition file.

Task definition example for private registry credentials

    "containerDefinitions": [
      {
        "image": "private-repo/private-image",
        "repositoryCredentials": {
          "credentialsParameter": "arn:aws:secretsmanager:region:aws_account_id:secret:secret_name"
        }
      }
    ]

How it works

Injection at container startup only

When starting your container, ECS will process any secrets directives found in the task definition file and make calls on your behalf to Systems Manager Parameter Store and/or Secrets Manager. Container startup is the only time when ECS will inject sensitive data into your container.

This means that your container will not automatically receive any subsequent updates to sensitive data, such as when credentials are rotated. In order to receive the updated sensitive data, you must launch a new container.

TIP: You can use the "Force new deployment" option for ECS services to recycle all containers without creating a new task definition file.
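
The same "Force new deployment" action is available through the API. Below is a minimal Python sketch, assuming the third-party boto3 package and configured AWS credentials; the function name and the cluster and service names are placeholders.

```python
def force_new_deployment(ecs_client, cluster: str, service: str) -> dict:
    """Ask ECS to replace the service's tasks using the current task definition.

    `ecs_client` is expected to be a boto3 ECS client. New tasks fetch
    the current secret values when their containers start.
    """
    return ecs_client.update_service(
        cluster=cluster,
        service=service,
        forceNewDeployment=True,
    )


# Usage (requires boto3 and AWS credentials):
#   import boto3
#   force_new_deployment(boto3.client("ecs"), "my-cluster", "my-service")
```

This could be triggered after a credential rotation so that running containers pick up the new values.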

Note that AWS recently launched AWS AppConfig, which is a new feature to deploy configurations across applications in a validated, controlled and monitored way. Configuration can be stored either as a Systems Manager Document or a single Parameter Store parameter.

At first glance, it may appear that AppConfig would help solve the problem of updating containers when secrets are changed. Unfortunately, applications must poll for changes when using AppConfig. This requires custom code in the application to poll for changes and then reload the configuration.
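
To give a sense of what that custom code involves, here is a generic polling loop in Python. It is a sketch only: `fetch` stands in for whatever call retrieves the configuration and is supplied by the caller, and none of the names below come from the AppConfig API.

```python
import time
from typing import Callable, Optional


def poll_for_changes(
    fetch: Callable[[], str],
    on_change: Callable[[str], None],
    interval_seconds: float = 60.0,
    max_polls: Optional[int] = None,
) -> None:
    """Call `fetch` repeatedly and invoke `on_change` when the value differs.

    The first fetched value is also reported, which serves as the initial
    load. `max_polls=None` polls forever.
    """
    last = None
    polls = 0
    while max_polls is None or polls < max_polls:
        current = fetch()
        if current != last:
            on_change(current)  # reload the application's configuration here
            last = current
        polls += 1
        if max_polls is None or polls < max_polls:
            time.sleep(interval_seconds)
```

Every application that wants live updates has to carry a loop like this, plus the logic to safely apply a new value, which is why relaunching containers is often the simpler route.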

Required configuration and IAM permissions

Note that during this injection of sensitive data, ECS is making calls to Systems Manager Parameter Store and Secrets Manager on your behalf. In order to make those calls, the ECS agent uses the ECS Task Execution IAM Role (ecsTaskExecutionRole).

Therefore, when specifying secrets in your task definition file, you must also set the executionRoleArn parameter to the ARN of a role (commonly named ecsTaskExecutionRole) that has the proper permissions to make calls to Parameter Store and/or Secrets Manager.

Also, if you are using sensitive data for log configuration and the EC2 launch type, you will need to update the ECS agent configuration (the "/etc/ecs/ecs.config" file) to specify the following flag:

ECS_ENABLE_AWSLOGS_EXECUTIONROLE_OVERRIDE=true

Putting it all together - implementation steps

Now that we know how ECS injects sensitive data into our containers, let's walk through the implementation steps to make this all work.

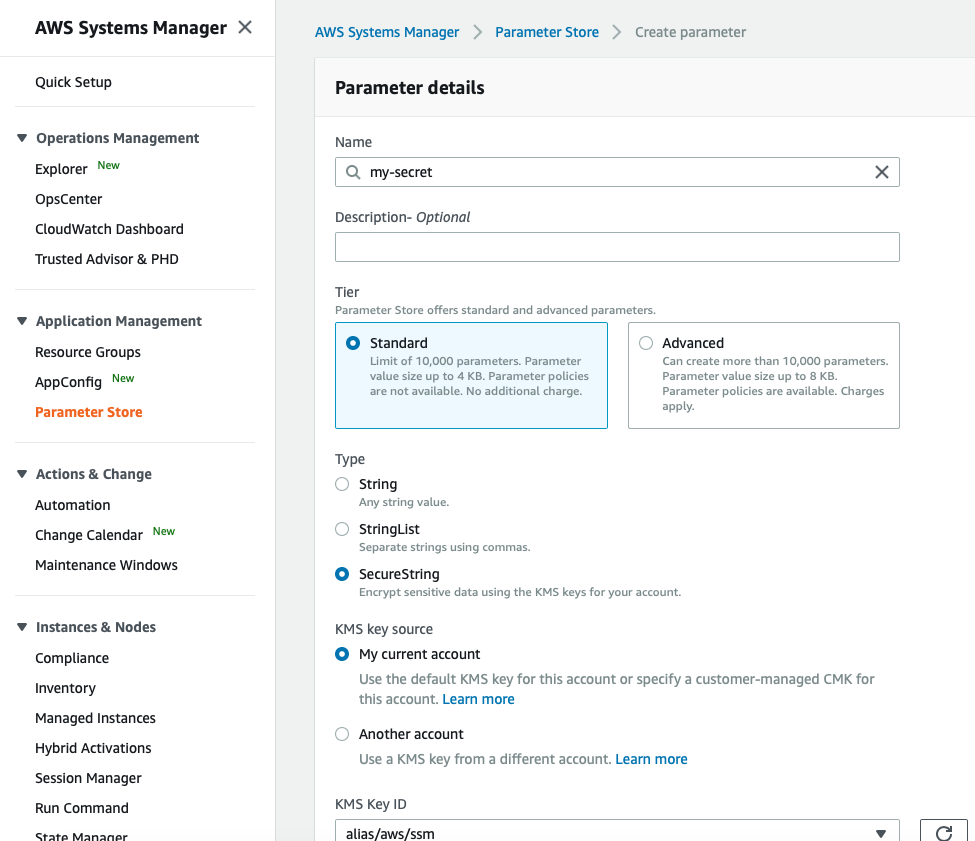

1. Store secret

First we need to store the sensitive data in either Systems Manager Parameter Store or Secrets Manager. You can do this by using the AWS Console, using the AWS Command Line Interface (CLI) or by making a direct API call.
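
As an example of the API route, the following Python sketch stores a value in each service. It assumes the third-party boto3 package and configured AWS credentials; the function names, parameter name, and secret name are placeholders.

```python
def store_parameter(ssm_client, name: str, value: str) -> None:
    """Store a value in Parameter Store as an encrypted SecureString."""
    ssm_client.put_parameter(
        Name=name,
        Value=value,
        Type="SecureString",
        Overwrite=True,  # replace the value if the parameter already exists
    )


def store_secret(secrets_client, name: str, value: str) -> str:
    """Create a secret in Secrets Manager and return its ARN."""
    response = secrets_client.create_secret(Name=name, SecretString=value)
    return response["ARN"]


# Usage (requires boto3 and AWS credentials):
#   import boto3
#   store_parameter(boto3.client("ssm"), "/prod/db_password", "example-value")
#   arn = store_secret(boto3.client("secretsmanager"), "prod/api_key", "example-value")
```

The ARN returned by `store_secret` is the value to reference in `valueFrom` in the task definition.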

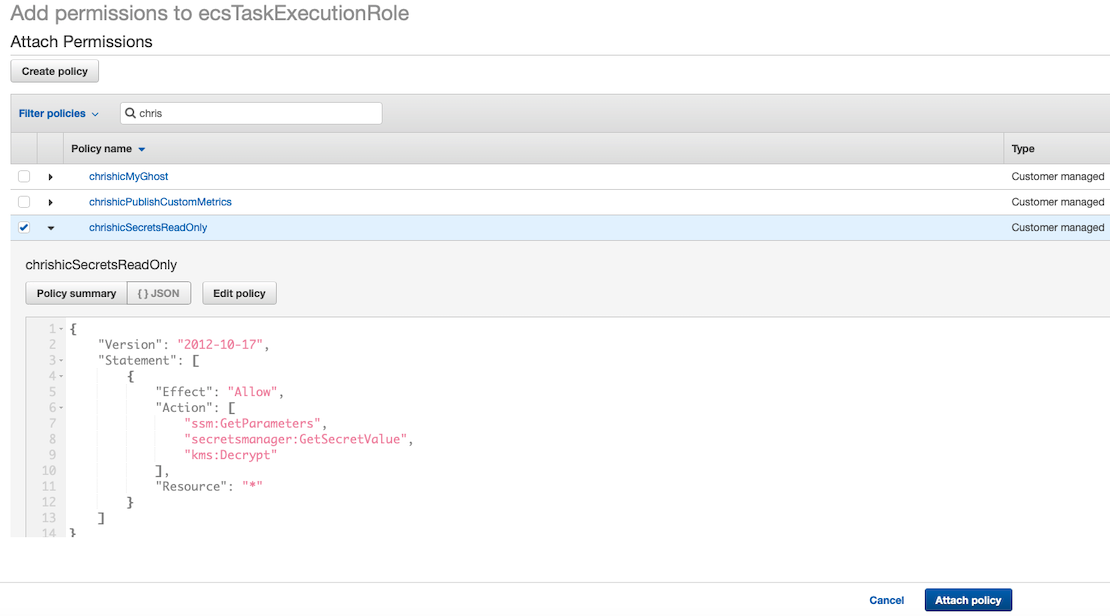

2. Configure the ECS Task Execution role

Next, we need to ensure that the ECS Task Execution role has permissions to make calls to Systems Manager Parameter Store or Secrets Manager.

Warning: If the ECS Task Execution role doesn't have the correct permissions, the container will fail to start (i.e. "hard fail") and you'll see an error message similar to the following:

    Stopped reason Fetching secret data from AWS Secrets Manager in
    region us-west-2: secret arn:aws:secretsmanager:secret:/my-secret:
    AccessDeniedException: User: arn:aws:sts:assumed-role/ecsTaskExecutionRole
    is not authorized to perform: secretsmanager:GetSecretValue on
    resource: arn:aws:secretsmanager:secret:/my-secret

The ECS Task Execution role will need permission to the following actions:

- ssm:GetParameters - if using Systems Manager Parameter Store
- secretsmanager:GetSecretValue - if using Secrets Manager
- kms:Decrypt - if your secret uses a custom KMS key (i.e. not using the default encryption key)

In order to give the ECS Task Execution role these permissions, create a new IAM policy and then attach this policy to the ECS Task Execution role. As a best practice, you should also consider explicitly specifying the resources (parameters, secrets, CMKs) that can be accessed.

Example Task Execution Role Inline Policy

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Action": [
            "ssm:GetParameters",
            "secretsmanager:GetSecretValue",
            "kms:Decrypt"
          ],
          "Resource": [
            "arn:aws:ssm:<region>:<aws_account_id>:parameter/parameter_name",
            "arn:aws:secretsmanager:<region>:<aws_account_id>:secret:secret_name",
            "arn:aws:kms:<region>:<aws_account_id>:key/key_id"
          ]
        }
      ]
    }
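
If you manage many secrets, you may prefer to generate this policy rather than edit it by hand. The Python sketch below builds an equivalent document from lists of ARNs. The function name and sample ARNs are assumptions, and unlike the example above it emits one statement per service so that each action is paired only with its own resources.

```python
import json


def execution_role_policy(parameter_arns=(), secret_arns=(), kms_key_arns=()):
    """Build an IAM policy document that allows reading only the listed resources."""
    grants = [
        ("ssm:GetParameters", parameter_arns),
        ("secretsmanager:GetSecretValue", secret_arns),
        ("kms:Decrypt", kms_key_arns),
    ]
    statements = [
        {"Effect": "Allow", "Action": [action], "Resource": list(arns)}
        for action, arns in grants
        if arns  # skip services that are not used
    ]
    return {"Version": "2012-10-17", "Statement": statements}


if __name__ == "__main__":
    # Placeholder ARNs for illustration.
    policy = execution_role_policy(
        parameter_arns=["arn:aws:ssm:us-west-2:123456789012:parameter/prod/db_password"],
        secret_arns=["arn:aws:secretsmanager:us-west-2:123456789012:secret:secret_name-AbCdEf"],
    )
    print(json.dumps(policy, indent=2))

# To attach it as an inline policy with boto3 (third-party, needs AWS credentials):
#   boto3.client("iam").put_role_policy(
#       RoleName="ecsTaskExecutionRole",
#       PolicyName="ecs-secrets-access",  # placeholder policy name
#       PolicyDocument=json.dumps(policy),
#   )
```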

TIP: Parameter Store supports hierarchies directly and you can specify wildcards in resource ARNs, such as arn:aws:ssm:us-west-2:123456789012:parameter/prod-*.

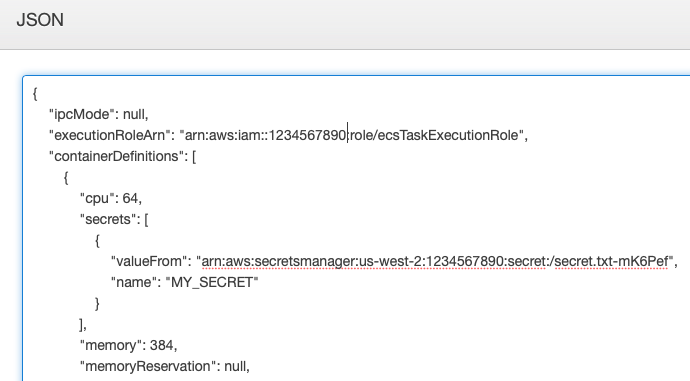

3. Update task definition file

The last step is to update the task definition file for our container. After specifying the secrets to be injected (using one or more of the three available options described above), we then set the executionRoleArn parameter to the ARN of the ECS Task Execution role we configured.

Note: If you specify secrets injection in your task definition, but leave executionRoleArn unspecified, you will get an error when trying to save the task definition.
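
You can catch this mistake before submitting the task definition. The Python sketch below checks a task definition (loaded as a dict) for any of the three injection types and verifies that the execution role field, `executionRoleArn` in the task definition JSON, is set. The function names are illustrative assumptions.

```python
def needs_execution_role(task_def: dict) -> bool:
    """Return True if any container uses one of the three injection types."""
    for container in task_def.get("containerDefinitions", []):
        if container.get("secrets"):
            return True
        if container.get("repositoryCredentials"):
            return True
        if container.get("logConfiguration", {}).get("secretOptions"):
            return True
    return False


def check_task_definition(task_def: dict) -> None:
    """Raise ValueError if secrets are injected without an execution role."""
    if needs_execution_role(task_def) and not task_def.get("executionRoleArn"):
        raise ValueError(
            "Task definition injects sensitive data but executionRoleArn is not set"
        )


# Usage:
#   import json
#   with open("task-definition.json") as f:  # placeholder file name
#       check_task_definition(json.load(f))
```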

After updating the task definition, deploy it as a new task revision. After deployment is complete, your containers will have the specified sensitive data injected into them, securely, using Parameter Store and/or Secrets Manager. To verify, you can open a shell in a running container (for example, by connecting to the EC2 container instance over SSH and using docker exec) and view its environment variables with the env command.
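
Printing the full environment exposes the secret values in your terminal and possibly in logs. As a gentler check, a small Python script like the following reports only whether each expected variable is set. It is a sketch; the function name is an assumption and the variable names come from the earlier example.

```python
import os


def masked_report(names, environ=None):
    """Return {name: status} without revealing any secret value."""
    environ = os.environ if environ is None else environ
    report = {}
    for name in names:
        value = environ.get(name)
        report[name] = f"<set, {len(value)} chars>" if value else "<not set>"
    return report


if __name__ == "__main__":
    for name, status in masked_report(["MY_SECRET", "ANOTHER_SECRET"]).items():
        print(f"{name}: {status}")
```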